The Complete Guide to A/B Testing Sample Size (2026)

Learn how to calculate the perfect sample size for statistically significant A/B test results. Includes free calculator, formulas, real examples, and best practices.

Running A/B tests without the right sample size is like flipping a coin twice and declaring the result meaningful. You need enough data to confidently know if your variation actually performs better—or if you're just seeing random noise.

This complete guide will teach you everything you need to know about sample size calculation, from basic concepts to advanced techniques. By the end, you'll be able to confidently determine exactly how many visitors you need for reliable test results.

Try Our Free Sample Size Calculator →

Why Sample Size Matters

The Cost of Getting It Wrong

Too Small: Stop your test too early, and you might:

- Implement a "winning" variation that actually performs worse

- Miss detecting a real improvement

- Waste development time on false positives

- Make decisions based on random chance, not real data

Too Large: Run your test too long, and you'll:

- Waste weeks waiting for unnecessary data

- Delay shipping improvements

- Incur opportunity costs

- Lose to faster competitors

The sweet spot? Just enough samples to detect meaningful differences with statistical confidence.

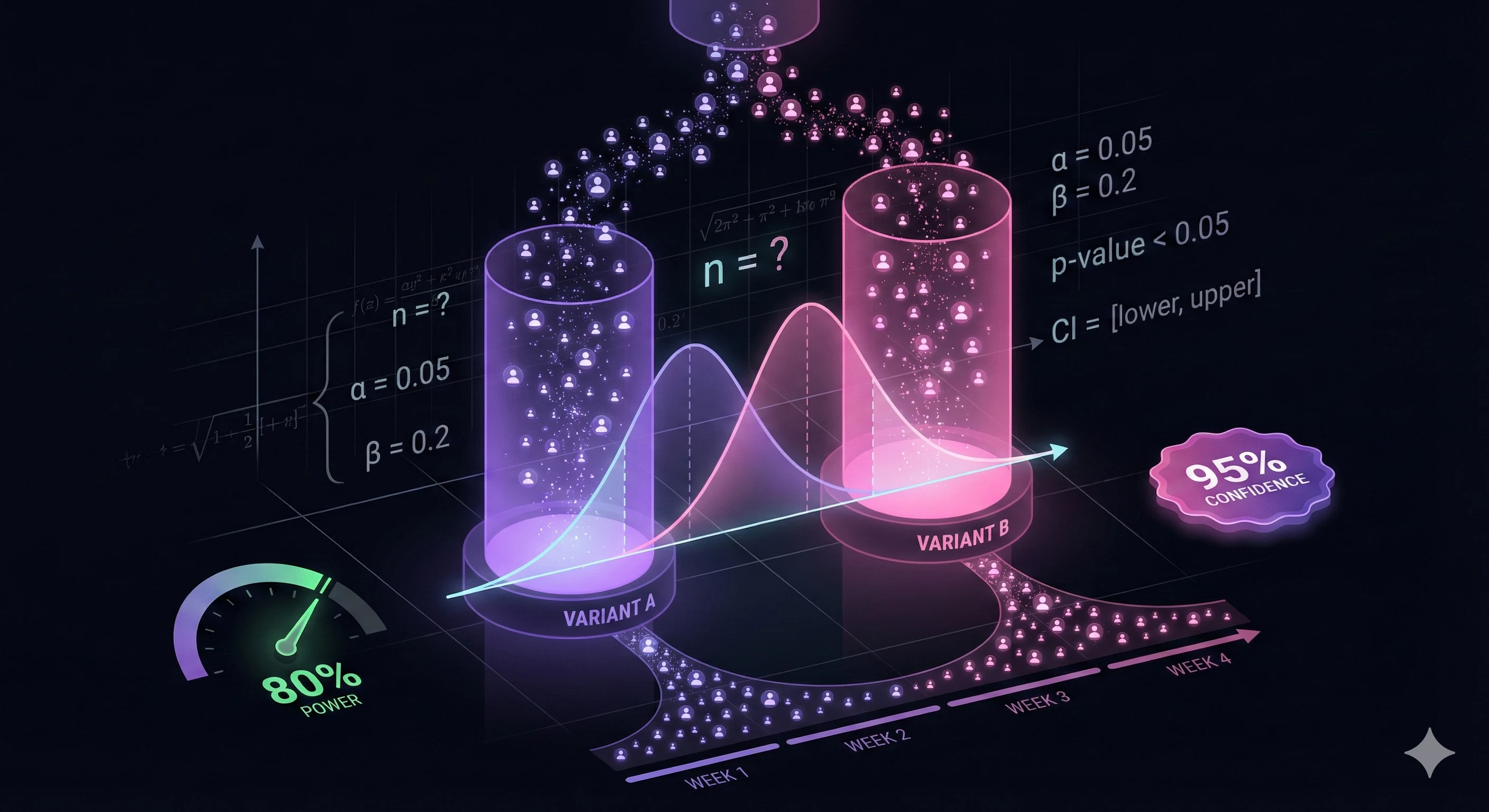

Key Statistical Concepts

Statistical Significance (α)

The significance level (α) is your acceptable risk of a false positive: declaring a winner when there isn't one.

Standard: 95% confidence (α = 0.05)

This means:

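- If the variations truly perform the same, there is only a 5% chance that random variation alone produces a "significant" result

- It does not mean there is a 95% probability that the observed difference is real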

- Industry standard for most A/B tests

When to adjust:

- 99% confidence (α = 0.01): Critical changes (checkout flow, pricing)

- 90% confidence (α = 0.10): Exploratory tests, smaller decisions

Higher confidence requires larger sample sizes but reduces false positives.

Statistical Power (1-β)

Power is your ability to detect a real difference when one exists—avoiding false negatives.

Standard: 80% power (β = 0.20)

This means:

- 80% chance of detecting a real improvement at least as large as your MDE

- 20% risk of missing a genuine effect of that size

- Widely accepted in scientific research

When to increase:

- 90% power: Important business decisions

- 95% power: Mission-critical changes

Higher power requires more samples but ensures you don't miss real improvements.

Minimum Detectable Effect (MDE)

MDE is the smallest improvement you want to detect. It's often the most overlooked—and most important—input.

Setting realistic MDE:

- 10-20% relative improvement: Most common and achievable

- 5-10%: Requires very large samples

- 20%+: Easier to detect, might miss smaller wins

Example:

- Baseline: 2.5% conversion rate

- 20% relative improvement: 3.0% (2.5% × 1.20)

- Absolute improvement: 0.5 percentage points

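Be realistic. Setting a 50%+ MDE gives you a small sample size and a short test, but that test is underpowered for the 5-20% effects most changes actually produce, so it will usually miss them.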

Baseline Conversion Rate

Your current conversion rate strongly affects the required sample size. Lower baselines need more samples.

Why it matters:

- 1% → 1.2% (20% improvement): ~38,800 samples per variation

- 5% → 6% (20% improvement): ~7,400 samples per variation

- 10% → 12% (20% improvement): ~3,500 samples per variation

(Figures use the formula below at 95% confidence and 80% power.)

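At low conversion rates, the same relative lift is a much smaller absolute difference (0.2 percentage points at a 1% baseline versus 2 points at 10%). The random noise is large relative to that signal, so you need more samples to detect the change confidently.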
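To see the effect of the baseline yourself, here is a minimal Python sketch of the sample size formula from this guide. The function name `sample_size_per_variation` is our own, and it assumes the rounded Z-scores 1.96 (95% confidence) and 0.84 (80% power):

```python
import math


def sample_size_per_variation(baseline: float, relative_lift: float,
                              z_alpha: float = 1.96, z_beta: float = 0.84) -> int:
    """Visitors per variation needed to detect a relative lift over the baseline."""
    absolute_mde = baseline * relative_lift          # e.g. 1% x 20% = 0.2 points
    variance = 2 * baseline * (1 - baseline)         # the 2p(1-p) term
    n = (z_alpha + z_beta) ** 2 * variance / absolute_mde ** 2
    return math.ceil(round(n, 6))                    # trim float noise, then round up


for baseline in (0.01, 0.05, 0.10):
    n = sample_size_per_variation(baseline, relative_lift=0.20)
    print(f"{baseline:.0%} baseline, 20% lift: {n:,} per variation")
```

Holding the relative lift fixed at 20%, the required sample shrinks roughly tenfold as the baseline rises from 1% to 10%.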

The Sample Size Formula

Here's the complete formula for calculating sample size:

n = [Z_α/2 + Z_β]² × 2p(1-p) / (p₁ - p₂)²

Where:

- n = Required sample size per variation

- Z_α/2 = Z-score for significance level (1.96 for 95% confidence)

- Z_β = Z-score for power (0.84 for 80% power)

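- p = Baseline conversion rate (as a decimal, e.g. 0.025 for 2.5%)

- p₁ - p₂ = Absolute difference between the two conversion rates (the MDE in absolute terms)

This version uses the baseline's variance for both groups, a common approximation for a two-sided test. Calculators that plug in each group's own conversion rate can return slightly different numbers.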

Z-Score Table:

| Confidence | α | Z_α/2 |
|---|---|---|
| 90% | 0.10 | 1.645 |
| 95% | 0.05 | 1.960 |
| 99% | 0.01 | 2.576 |

| Power | β | Z_β |
|---|---|---|
| 70% | 0.30 | 0.524 |
| 80% | 0.20 | 0.842 |
| 90% | 0.10 | 1.282 |
| 95% | 0.05 | 1.645 |
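The table values are quantiles of the standard normal distribution. A short sketch using Python's standard-library `statistics.NormalDist` reproduces them (the helper names are our own):

```python
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def z_alpha_two_sided(confidence: float) -> float:
    """Z_α/2 for a two-sided test at the given confidence level (e.g. 0.95)."""
    alpha = 1 - confidence
    return STANDARD_NORMAL.inv_cdf(1 - alpha / 2)


def z_beta(power: float) -> float:
    """Z_β for the given statistical power (e.g. 0.80)."""
    return STANDARD_NORMAL.inv_cdf(power)


for confidence in (0.90, 0.95, 0.99):
    print(f"{confidence:.0%} confidence: Z_α/2 = {z_alpha_two_sided(confidence):.3f}")
for power in (0.70, 0.80, 0.90, 0.95):
    print(f"{power:.0%} power: Z_β = {z_beta(power):.3f}")
```

Computing the Z-scores this way also covers non-standard levels, such as the Bonferroni-adjusted confidence discussed later.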

Example Calculation

Inputs:

- Baseline conversion: 2.5%

- MDE: 20% relative (target rate 3.0%, an absolute difference of 0.5 percentage points)

- Confidence: 95% (Z = 1.96)

- Power: 80% (Z = 0.84)

Calculation:

p = 0.025

p₁ - p₂ = 0.005

n = [1.96 + 0.84]² × 2(0.025)(0.975) / (0.005)²

n = [2.8]² × 0.04875 / 0.000025

n = 7.84 × 0.04875 / 0.000025

n = 15,288 samples per variation

Total needed: 30,576 samples (both variations)
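The same calculation as a minimal Python sketch, assuming the rounded Z-scores used above (the function name is our own):

```python
import math


def sample_size_per_variation(p: float, absolute_mde: float,
                              z_alpha: float = 1.96, z_beta: float = 0.84) -> int:
    """n = [Z_α/2 + Z_β]² × 2p(1-p) / (p₁ - p₂)², rounded up to whole visitors."""
    n = (z_alpha + z_beta) ** 2 * 2 * p * (1 - p) / absolute_mde ** 2
    # Round away floating-point noise first so an exact result like 15,288
    # is not pushed up to 15,289 by math.ceil.
    return math.ceil(round(n, 6))


per_variation = sample_size_per_variation(p=0.025, absolute_mde=0.005)
print(f"{per_variation:,} per variation, {2 * per_variation:,} total")
```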

Or just use our calculator and skip the math: Sample Size Calculator →

Step-by-Step: Calculate Your Sample Size

Step 1: Measure Baseline Conversion Rate

Use at least 1 week of historical data:

- Account for day-of-week patterns

- Avoid holiday periods (unless testing seasonal changes)

- Ensure traffic is stable and representative

Example: 250 conversions / 10,000 visitors = 2.5% baseline

Step 2: Set Your Minimum Detectable Effect

Ask yourself: "What improvement would be worth implementing?"

Guidelines:

- New test hypothesis: Start with 10-20% relative improvement

- Refinement test: Can detect smaller (5-10%)

- Revolutionary change: Might see 30%+ improvement

Example: 20% improvement on 2.5% = 3.0% target (0.5pp absolute)

Step 3: Choose Significance Level

Standard: 95% confidence

Adjust based on risk tolerance:

- Critical path (checkout, pricing): 99%

- Standard test (copy, design): 95%

- Exploration (minor tweaks): 90%

Step 4: Set Statistical Power

Standard: 80% power

Increase for important tests:

- Business-critical decisions: 90%

- Major investments: 95%

Higher power = more samples but less risk of missing real effects.

Step 5: Account for Multiple Variations

Testing more than 2 variations? Apply the Bonferroni correction to keep the overall false positive rate at your chosen α:

Adjusted α = α / number of comparisons

Example: 3 variations (A vs B, A vs C, B vs C = 3 comparisons)

- Original α: 0.05

- Adjusted α: 0.05 / 3 = 0.0167

- Use 98.3% confidence instead of 95%

This increases required sample size but prevents false positives from multiple testing.
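The adjustment is easy to script. This sketch assumes every pair of variations is compared, as in the example above, and derives the matching Z-score with the standard library (the helper name `bonferroni` is our own):

```python
from statistics import NormalDist


def bonferroni(alpha: float, variations: int) -> tuple[int, float, float]:
    """Pairwise comparisons, adjusted α, and the matching two-sided Z-score."""
    comparisons = variations * (variations - 1) // 2   # k(k-1)/2 pairs
    adjusted_alpha = alpha / comparisons
    z_alpha = NormalDist().inv_cdf(1 - adjusted_alpha / 2)
    return comparisons, adjusted_alpha, z_alpha


comparisons, adjusted_alpha, z_alpha = bonferroni(alpha=0.05, variations=3)
print(f"{comparisons} comparisons, α = {adjusted_alpha:.4f}, "
      f"confidence = {1 - adjusted_alpha:.1%}, Z_α/2 = {z_alpha:.3f}")
```

Plug the resulting Z_α/2 (about 2.394 here, versus 1.96) into the sample size formula in place of the standard value.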

Step 6: Calculate Test Duration

Test Duration = (Sample Size × Variations) / Daily Traffic

Example:

- Sample size needed: 15,288 per variation

- Variations: 2

- Daily traffic: 500 visitors

- Duration: (15,288 × 2) / 500 ≈ 61.2 days, rounded up to 63 days (9 full weeks)

Important: Round up and run for complete weeks (14, 21, 28 days) to account for weekly patterns.
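A minimal sketch of this step, rounding the raw duration up to whole weeks. The `min_weeks` floor of 2 is our assumption, following the 1-2 week minimum recommended later in this guide:

```python
import math


def duration_days(sample_per_variation: int, variations: int,
                  daily_traffic: int, min_weeks: int = 2) -> tuple[float, int]:
    """Raw days of traffic needed, and that figure rounded up to complete weeks."""
    raw_days = sample_per_variation * variations / daily_traffic
    weeks = max(min_weeks, math.ceil(raw_days / 7))
    return raw_days, weeks * 7


raw_days, scheduled_days = duration_days(15_288, variations=2, daily_traffic=500)
print(f"{raw_days:.1f} days of traffic -> run for {scheduled_days} days")
```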

Real-World Examples

Example 1: E-commerce Product Page

Scenario: Testing new product page layout

Inputs:

- Current conversion: 3.2%

- MDE: 15% relative improvement (3.68%)

- Confidence: 95%

- Power: 80%

- Variations: 2 (A/B test)

- Daily traffic: 800 visitors

Results:

- Sample size: 21,081 per variation

- Total needed: 42,162 visitors

- Test duration: ~53 days at 800/day, rounded up to 56 days

Decision: Run the test for 8 full weeks.

Example 2: SaaS Signup Flow

Scenario: Streamlining multi-step signup

Inputs:

- Current conversion: 8.5%

- MDE: 12% relative improvement (9.52%)

- Confidence: 95%

- Power: 90% (important change)

- Variations: 2

- Daily traffic: 300 visitors

Results:

- Sample size: 15,715 per variation (Z_β = 1.282 for 90% power)

- Total needed: 31,430 visitors

- Test duration: ~105 days at 300/day

Decision: Run for 15 full weeks (105 days) to ensure complete week coverage.

Example 3: Email Subject Line

Scenario: A/B/C test of 3 subject lines

Inputs:

- Current open rate: 22%

- MDE: 10% relative (24.2%)

- Confidence: 95%

- Power: 80%

- Variations: 3 (A/B/C)

- List size: 50,000

With Bonferroni correction:

- Adjusted confidence: 98.3% (α = 0.0167)

- Sample size: 7,417 per variation (Z_α/2 ≈ 2.394)

- Total needed: 22,251 emails

Decision: Send to a random subset of about 22,500 subscribers (7,500 per subject line), then analyze after 24 hours.
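To double-check scenarios like these, here is a sketch that recomputes each one with the guide's formula. It uses exact Z-scores from `statistics.NormalDist` rather than the rounded table values, so its results can differ from hand calculations by a fraction of a percent. The scenario labels and parameter names are our own:

```python
import math
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()


def sample_size_per_variation(baseline: float, relative_lift: float,
                              confidence: float = 0.95, power: float = 0.80,
                              comparisons: int = 1) -> int:
    alpha = (1 - confidence) / comparisons              # Bonferroni-adjusted α
    z_alpha = STANDARD_NORMAL.inv_cdf(1 - alpha / 2)
    z_beta = STANDARD_NORMAL.inv_cdf(power)
    absolute_mde = baseline * relative_lift
    n = (z_alpha + z_beta) ** 2 * 2 * baseline * (1 - baseline) / absolute_mde ** 2
    return math.ceil(n)


scenarios = {
    "E-commerce product page": dict(baseline=0.032, relative_lift=0.15),
    "SaaS signup flow": dict(baseline=0.085, relative_lift=0.12, power=0.90),
    "Email subject line (A/B/C)": dict(baseline=0.22, relative_lift=0.10, comparisons=3),
}
results = {name: sample_size_per_variation(**kwargs) for name, kwargs in scenarios.items()}
for name, n in results.items():
    print(f"{name}: {n:,} per variation")
```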

Special Considerations

Multiple Variations (Bonferroni Correction)

When testing 3+ variations, you make multiple comparisons:

- 3 variations = 3 comparisons (A vs B, A vs C, B vs C)

- 4 variations = 6 comparisons

- 5 variations = 10 comparisons

Formula: k(k-1)/2 where k = number of variations

Each comparison increases false positive risk. Bonferroni correction divides your significance level by the number of comparisons.

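Impact: Testing 4 variations instead of 2 (6 comparisons instead of 1) increases the required sample size per variation by roughly 50%. Because traffic is also split four ways, the total traffic needed roughly triples.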
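A sketch of where that estimate comes from, assuming all pairwise comparisons, α = 0.05, and 80% power. Only the Z_α/2 term changes with the correction, so the per-variation cost is the ratio of the squared Z sums (the function name is our own):

```python
from statistics import NormalDist


def per_variation_multiplier(variations: int, alpha: float = 0.05,
                             power: float = 0.80) -> float:
    """Per-variation sample size relative to a plain two-variation A/B test."""
    nd = NormalDist()
    comparisons = variations * (variations - 1) // 2
    z_beta = nd.inv_cdf(power)
    z_plain = nd.inv_cdf(1 - alpha / 2)
    z_adjusted = nd.inv_cdf(1 - alpha / comparisons / 2)
    return ((z_adjusted + z_beta) / (z_plain + z_beta)) ** 2


for k in (2, 3, 4, 5):
    m = per_variation_multiplier(k)
    # Total traffic also scales with the number of arms (k / 2 versus an A/B test)
    print(f"{k} variations: {m:.2f}x per variation, {m * k / 2:.2f}x total traffic")
```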

Recommendation: Limit to 2-3 variations per test. Run sequential tests instead of testing everything at once.

Low-Traffic Websites

Problem: Required sample size might mean 6+ month test duration.

Solutions:

1. Increase MDE: Accept detecting larger effects only

- Instead of 10% improvement, test for 25%

- Reduces sample size by ~85%

2. Test higher-traffic pages:

- Homepage instead of specific product page

- Signup flow instead of settings page

3. Combine traffic sources:

- Test across multiple similar pages

- Aggregate data carefully

4. Use sequential testing:

- Check results at predetermined intervals

- Stop early if strong signal emerges

- Requires specialized statistical methods

5. Accept longer test durations:

- Ensure test runs full weeks (1-2 minimum)

- Monitor for external changes during test
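The ~85% figure in option 1 follows from the formula: sample size scales with 1 / (absolute MDE)², so moving from a 10% to a 25% lift multiplies it by (10/25)² = 0.16. A quick sketch, using a hypothetical 2.5% baseline (the ratio does not depend on it):

```python
def sample_size_per_variation(baseline: float, relative_lift: float,
                              z_alpha: float = 1.96, z_beta: float = 0.84) -> float:
    """Unrounded n from the guide's formula, so the ratio below is exact."""
    absolute_mde = baseline * relative_lift
    return (z_alpha + z_beta) ** 2 * 2 * baseline * (1 - baseline) / absolute_mde ** 2


baseline = 0.025  # hypothetical baseline conversion rate
n_small_mde = sample_size_per_variation(baseline, 0.10)
n_large_mde = sample_size_per_variation(baseline, 0.25)
reduction = 1 - n_large_mde / n_small_mde
print(f"10% MDE: {n_small_mde:,.0f}  25% MDE: {n_large_mde:,.0f}  reduction: {reduction:.0%}")
```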

Multi-Page Funnels

Testing a funnel with multiple steps requires a different approach:

Problem: Conversion rate decreases at each step

- Landing page: 10,000 visitors

- Step 2: 3,000 (30% conversion)

- Step 3: 1,200 (40% of step 2)

- Purchase: 300 (25% of step 3)

- Overall: 3% conversion

Solution: Calculate based on the final conversion rate (3%), not individual steps.

Because only 3% of landing-page visitors reach the purchase step, you need far more top-of-funnel traffic than the step-level conversion rates would suggest.
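A sketch of the funnel math above: multiply the step rates to get the overall rate, then compare the sample size needed on the first step with the one needed on the final purchase rate. The 20% relative MDE is a hypothetical choice for illustration:

```python
import math


def sample_size_per_variation(baseline: float, relative_lift: float,
                              z_alpha: float = 1.96, z_beta: float = 0.84) -> int:
    absolute_mde = baseline * relative_lift
    n = (z_alpha + z_beta) ** 2 * 2 * baseline * (1 - baseline) / absolute_mde ** 2
    return math.ceil(round(n, 6))


step_rates = [0.30, 0.40, 0.25]       # landing -> step 2 -> step 3 -> purchase
overall_rate = math.prod(step_rates)  # 0.30 x 0.40 x 0.25 = 3%

# Hypothetical 20% relative MDE, applied to the first step vs. the whole funnel
n_first_step = sample_size_per_variation(step_rates[0], 0.20)
n_full_funnel = sample_size_per_variation(overall_rate, 0.20)
print(f"Overall conversion: {overall_rate:.1%}")
print(f"Needed per variation: {n_first_step:,} (first step) vs {n_full_funnel:,} (purchase)")
```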

Mobile vs. Desktop

Different devices often have different conversion rates and user behavior:

Approach 1: Segment analysis

- Run test on all traffic

- Analyze mobile and desktop separately post-test

- Requires a larger total sample if each segment needs enough data to be analyzed on its own

Approach 2: Separate tests

- Run mobile-specific and desktop-specific tests

- Allows device-optimized variations

- Doubles traffic requirements

Recommendation: Start with combined test, segment analysis. Run separate tests only if device behavior differs dramatically.

Common Mistakes to Avoid

1. The Peeking Problem

Mistake: Checking results daily and stopping when p-value < 0.05

Why it's wrong: The more you check, the higher your false positive rate. You'll eventually see p < 0.05 by random chance.

Fix:

- Commit to sample size upfront

- Check results only at predetermined milestones

- Use sequential testing methods if you must peek

- Wait for full sample size before declaring winner
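To see the peeking problem in action, here is a hypothetical A/A simulation: both arms share the same true 10% conversion rate, so every "winner" is a false positive. Each simulated test checks a pooled two-proportion z-test at 10 looks of 200 visitors per arm. All parameters are illustrative assumptions:

```python
import math
import random
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, conv_b: int, n_per_arm: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test with equal arm sizes."""
    pooled = (conv_a + conv_b) / (2 * n_per_arm)
    se = math.sqrt(pooled * (1 - pooled) * 2 / n_per_arm)
    if se == 0:
        return 1.0
    z = (conv_b - conv_a) / n_per_arm / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_tests=1_000, looks=10, visitors_per_look=200,
                      rate=0.10, alpha=0.05, seed=42):
    """Share of A/A tests (no real difference) that get declared 'significant'."""
    rng = random.Random(seed)
    peeking_false_positives = 0
    fixed_false_positives = 0
    for _ in range(n_tests):
        conv_a = conv_b = n_per_arm = 0
        significant_at_some_look = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            n_per_arm += visitors_per_look
            p_value = two_proportion_p_value(conv_a, conv_b, n_per_arm)
            if p_value < alpha:
                significant_at_some_look = True   # a peeker would stop here
        peeking_false_positives += significant_at_some_look
        fixed_false_positives += p_value < alpha  # only the final look counts
    return peeking_false_positives / n_tests, fixed_false_positives / n_tests


peeking_rate, fixed_rate = simulate_aa_tests()
print(f"False positives, checking at every look: {peeking_rate:.1%}")
print(f"False positives, checking once at the end: {fixed_rate:.1%}")
```

The single check at the end should land near the nominal 5%, while stopping at the first significant look flags several times as many tests.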

2. Ignoring Weekly Patterns

Mistake: Running test for exactly 10 days or stopping mid-week

Why it's wrong:

- Weekend traffic behaves differently than weekday

- Your test might catch 2 Saturdays but only 1 Friday

- Creates sampling bias

Fix:

- Always run tests in complete weeks (7, 14, 21, or 28 days)

- End tests a whole number of weeks after they start (e.g. start on a Monday, stop at the end of a Sunday)

- Minimum 1-2 full weeks even if you hit sample size sooner
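A small sketch of the weekday imbalance described above, counting how often each weekday appears in a test window. The start date is hypothetical (2026-01-03 is a Saturday):

```python
from collections import Counter
from datetime import date, timedelta

WEEKDAY_NAMES = ("Monday", "Tuesday", "Wednesday", "Thursday",
                 "Friday", "Saturday", "Sunday")  # date.weekday(): Monday == 0


def weekday_counts(start: date, days: int) -> Counter:
    """How many times each weekday appears in a test that runs `days` days."""
    return Counter(WEEKDAY_NAMES[(start + timedelta(days=offset)).weekday()]
                   for offset in range(days))


start = date(2026, 1, 3)  # hypothetical start date (a Saturday)
print("10-day test:", dict(weekday_counts(start, 10)))
print("14-day test:", dict(weekday_counts(start, 14)))
```

The 10-day window contains two Saturdays but only one Friday; the 14-day window contains every weekday exactly twice.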

3. Testing Too Many Variations

Mistake: Running A/B/C/D/E test with 5 variations

Why it's wrong:

- Requires Bonferroni correction

- Massively increases total required traffic (often 3-4x)

- Splits traffic too thinly

- Each variation gets fewer samples

Fix:

- Limit to 2-3 variations maximum

- Run sequential tests instead

- Focus on testing one hypothesis at a time

- Use multivariate testing only when necessary and with huge traffic

4. Setting Unrealistic MDE

Mistake: Expecting 50%+ improvements as minimum

Why it's wrong:

- Produces small sample sizes that are badly underpowered for realistic effects

- Almost guarantees you'll miss smaller but still valuable 10-15% wins

Fix:

- Be realistic: most winning tests improve 5-20%

- Set MDE based on business value, not wishful thinking

- Consider: would a 10% improvement be worth implementing?

5. Stopping Tests Early

Mistake: Stopping at 80% of required sample because results look good

Why it's wrong:

- Underpowered test

- Higher false positive rate

- Results likely to regress to the mean

Fix:

- Commit to full sample size before starting

- Set calendar reminders for proper end date

- If you must end early, acknowledge increased error risk

Sample Size Benchmarks by Industry

Based on analysis of thousands of A/B tests:

E-commerce

| Metric | Typical Range | Required Sample | Test Duration* |
|---|---|---|---|
| Product page CR | 2-5% | 10,000-20,000 | 14-30 days |
| Add to cart rate | 10-20% | 3,000-6,000 | 7-14 days |
| Checkout completion | 40-60% | 1,000-2,000 | 3-7 days |

*Assuming 1,000 visitors/day

SaaS

| Metric | Typical Range | Required Sample | Test Duration* |
|---|---|---|---|
| Signup conversion | 5-15% | 3,000-8,000 | 10-20 days |
| Free to paid | 2-5% | 10,000-20,000 | 30-60 days |
| Feature adoption | 20-40% | 1,500-3,000 | 5-10 days |

*Assuming 500 visitors/day

B2B/Lead Gen

| Metric | Typical Range | Required Sample | Test Duration* |
|---|---|---|---|
| Form submission | 3-8% | 5,000-15,000 | 20-40 days |
| Demo request | 1-3% | 15,000-40,000 | 60-120 days |
| Content download | 10-25% | 2,000-5,000 | 7-15 days |

*Assuming 300 visitors/day

Media/Publishing

| Metric | Typical Range | Required Sample | Test Duration* |
|---|---|---|---|
| Click-through rate | 5-15% | 3,000-8,000 | 2-5 days |
| Email signup | 2-6% | 8,000-20,000 | 5-12 days |
| Video completion | 30-60% | 1,000-2,000 | 1-3 days |

*Assuming 2,500 visitors/day

Note: These are starting points. Your actual requirements depend on your specific MDE, confidence, and power settings.

Advanced Topics

Sequential Testing (SPRT)

What it is: Statistical method allowing you to peek at results and stop early while maintaining proper error rates.

How it works:

- Compare a running test statistic (such as a likelihood ratio) against predefined stopping boundaries

- Can stop when result crosses boundary

- Maintains alpha and beta levels

Benefits:

- Can reduce test duration by 20-50%

- Safe to monitor continuously

- Faster decisions on clear winners/losers

Tradeoffs:

- More complex to implement

- May run longer if results are ambiguous

- Requires specialized tools

Tools: Optimizely, VWO, and custom implementations
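To make the boundary idea concrete, here is a minimal sketch of Wald's original SPRT for a single stream of conversions. It tests a hypothetical baseline rate p0 against a target rate p1 using Wald's approximate boundaries. Production tools use more elaborate two-sample variants, and this is not meant to reproduce any particular tool's method:

```python
import math


def sprt_bernoulli(observations, p0: float, p1: float,
                   alpha: float = 0.05, beta: float = 0.20):
    """Wald's SPRT for H0: rate = p0 vs H1: rate = p1 on a stream of 0/1 outcomes."""
    upper = math.log((1 - beta) / alpha)   # crossing above: accept H1
    lower = math.log(beta / (1 - alpha))   # crossing below: accept H0
    llr = 0.0                              # running log-likelihood ratio
    n = 0
    for n, converted in enumerate(observations, start=1):
        if converted:
            llr += math.log(p1 / p0)
        else:
            llr += math.log((1 - p1) / (1 - p0))
        if llr >= upper:
            return "accept H1", n
        if llr <= lower:
            return "accept H0", n
    return "continue", n                   # no boundary crossed yet


print(sprt_bernoulli([1] * 100, p0=0.025, p1=0.03))
print(sprt_bernoulli([0] * 1000, p0=0.025, p1=0.03))
```

The test can stop at any observation, but only when the evidence crosses a boundary set in advance from α and β.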

Bayesian A/B Testing

Difference from frequentist: Instead of "Is there a difference?", asks "What's the probability variation B is better?"

Benefits:

- More intuitive interpretation

- Can incorporate prior knowledge

- Easier to monitor continuously, though frequent peeking can still inflate error rates

- Direct probability statements

Tradeoffs:

- Requires setting priors

- Can be seen as subjective

- Less standardized than frequentist

Sample sizes: Generally similar to frequentist, sometimes smaller with strong priors.
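A minimal sketch of the "probability B is better" calculation. It assumes uniform Beta(1, 1) priors and estimates the probability by Monte Carlo, drawing from each variation's Beta posterior with Python's `random.betavariate`. The conversion counts in the usage lines are made up for illustration:

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each arm: Beta(1 + conversions, 1 + non-conversions)
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


print(f"{prob_b_beats_a(100, 1000, 150, 1000):.1%}")  # B clearly ahead
print(f"{prob_b_beats_a(100, 1000, 100, 1000):.1%}")  # identical results
```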

Multi-Armed Bandit

What it is: Adaptive testing that automatically shifts traffic to better-performing variations.

How it works:

- Starts with even split

- Gradually allocates more traffic to winners

- Minimizes exposure to losers

When to use:

- Testing many variations (3+)

- Cost of showing losing variation is high

- Traffic is very high

- Willing to sacrifice some statistical rigor for practical gains

Not recommended for:

- Low traffic sites

- Testing two variations

- When you need definitive answers
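The guide doesn't name a specific bandit algorithm. Thompson sampling is one common choice, sketched here with Beta(1, 1) priors and simulated visitors. The true conversion rates (5% and 10%) are hypothetical:

```python
import random


def thompson_sampling(true_rates, rounds: int = 5_000, seed: int = 1):
    """Return how many visitors each variation received."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible rate for each arm from its posterior; show the best draw
        samples = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
        arm = samples.index(max(samples))
        if rng.random() < true_rates[arm]:   # simulated visitor converts
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


pulls = thompson_sampling([0.05, 0.10])
print(f"Visitors per variation: {pulls}")
```

Early on the priors are identical, so traffic is split roughly evenly; as evidence accumulates, most visitors go to the stronger variation, which is why bandits trade statistical rigor for lower exposure to losers.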

Tools & Resources

Sample Size Calculators

Our recommendation: WMMW Sample Size Calculator

- Clean interface

- All standard options

- Power analysis charts

- Test duration estimates

- Multiple variation support

Alternative options:

- Evan Miller's calculator (simple, accurate)

- Optimizely's calculator (built-in if using their platform)

- Google Optimize calculator (deprecated but still referenced)

A/B Testing Platforms

Enterprise:

- Optimizely: Full-featured, expensive, great for large teams

- VWO: Mid-market, good features, reasonable pricing

- Adobe Target: Enterprise-only, deep Analytics integration

Mid-Market:

- Convert: Privacy-focused, good for GDPR compliance

- AB Tasty: French company, strong in Europe

- Kameleoon: AI-powered, advanced segmentation

Budget/Small Teams:

- Google Optimize (discontinued in September 2023, so no longer an option)

- Microsoft Clarity + custom implementation

- Open source: GrowthBook, Unleash

Statistical Resources

Books:

- "Trustworthy Online Controlled Experiments" by Kohavi, Tang, Xu

- "A/B Testing: The Most Powerful Way to Turn Clicks Into Customers" by Siroker & Koomen

Online Courses:

- Udacity: A/B Testing by Google

- CXL: Advanced A/B Testing & Experimentation

Conclusion

Sample size calculation isn't guesswork—it's statistics. And getting it right is the difference between making data-driven decisions and random ones.

Key Takeaways:

- Don't wing it: Calculate required sample size before starting any test

- Be realistic: Set achievable MDEs (10-20% improvements)

- Commit to the number: Don't stop early or peek continuously

- Account for patterns: Run tests in complete weeks (minimum 1-2)

- Use tools: Let calculators do the math for you

Ready to calculate your sample size?

Use Our Free Sample Size Calculator →

It takes 30 seconds and ensures your next test produces reliable, actionable results.

Stop guessing. Start testing with confidence.

Related Resources:

- Conversion Rate Calculator - Calculate your baseline

- ROI Calculator - Estimate value of improvements

- Marketing ROI Calculator - Track test ROI

Questions? Drop a comment below or contact our analytics team.
